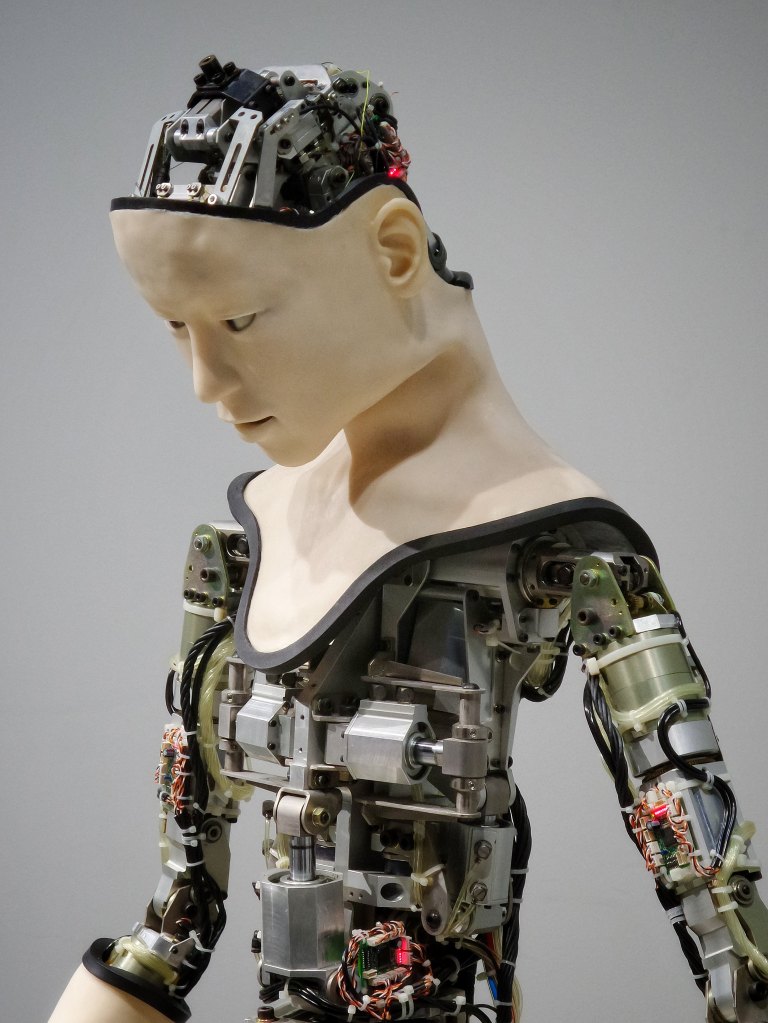

Horror movies love a good sequel. The genre is self-referential, so there’s a lot of give and take and reassessing. I may have waited a little too long to watch M3GAN 2.0, however. I remembered the premise of M3GAN: an AI robot companion built to keep a young girl company misreads its protocol and ends up killing people. I’d forgotten the details of how this came about, but as I watched the sequel, it started coming back. It might’ve been best if I’d rewatched M3GAN first, but weekends are only so long and I’ve got a lot to do. In any case, the sequel isn’t bad. This is sci-fi horror, but the future it foresees doesn’t seem very far off now. So, M3GAN was destroyed at the end of the first movie. Her maker, Gemma, has become kind of a Neo-Luddite, like yours truly, and is advocating for government control of AI. This need is underscored when AMELIA, a military application of M3GAN, goes rogue and starts killing people.

Fighting fire with fire, Gemma decides she needs to bring M3GAN back to stop AMELIA. After the usual chaos and action, it seems that AMELIA is going to merge with the motherboard of the first AI system built, which is now super-smart, and will then wipe out the human race. M3GAN, however, has “learned” empathy and is able to stop AMELIA by sacrificing herself. The film doesn’t have a clear message, although overall it seems to advocate caution regarding artificial intelligence. On that I agree. (Of course, we’ll need to get some kind of actual intelligence in the White House before we can consider any of this.) This does seem less horror and more action than the original, but it goes quickly and is fairly fun to watch.

A few months before seeing this, I’d watched Companion, another AI cautionary horror movie. A few months before that, Ex Machina. Companion was a bit better, I think, but the original M3GAN was out of the gate first. Ex Machina, however, arrived a decade earlier. The films are very different. Companion is about a sex-bot and M3GAN concerns a, well, companion for a lonely young orphan. Ex Machina is about an AI woman developed just because she can be. She, however, can’t be controlled either. All three films represent the zeitgeist of an underlying, lurking fear that we are really going in the wrong direction with all the tech we’ve created. All feature female robots, and none of them end well for humankind, at least if the implications are followed through. It might not be a bad idea to pay attention to the human creative side when thinking about Actual Intelligence.