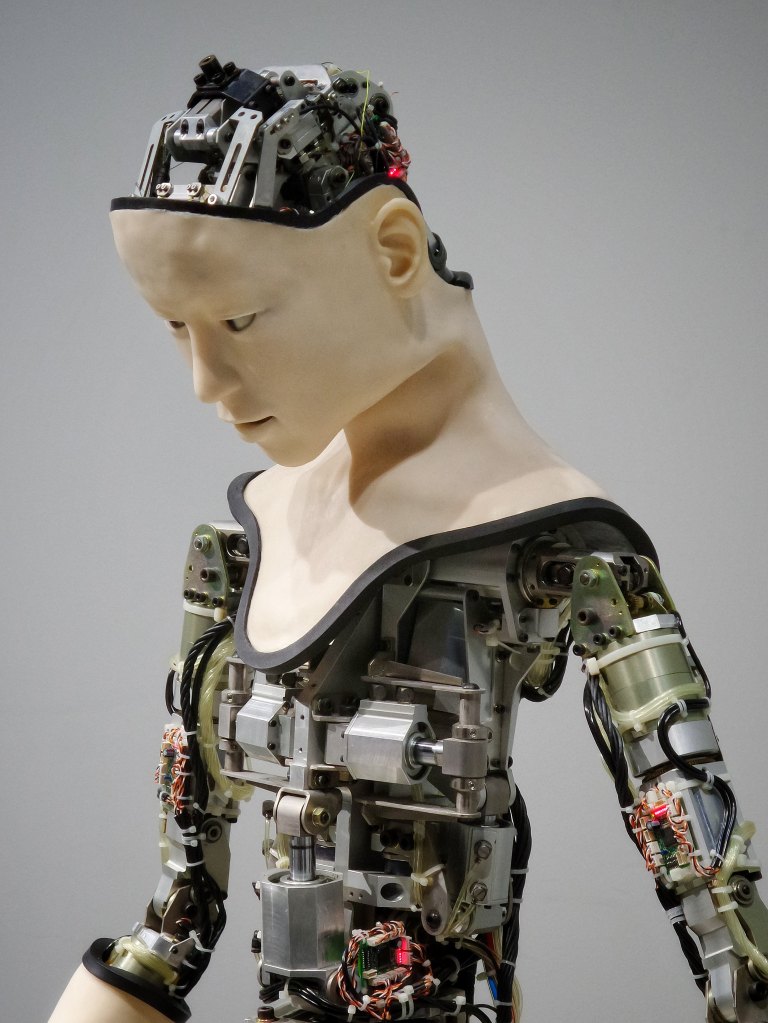

When I google something I try to ignore the AI suggestions. I was reminded why the other day. I was searching for a scholar at an eastern European university. I couldn’t find him at first since he shares the name of a locally famous musician. I added the university to the search and AI merged the two. It claimed that the scholar I was seeking was also a famous musician. This was despite the difference in their ages and the fact that they looked nothing alike. Al decided that since the musician had studied music at that university he must also have been a professor of religion there. A human being might also be tempted to make such a leap, but would likely want to get some confirmation first. Al has only text and pirated books to learn by. No wonder he’s confused.

I was talking to a scholar (not a musician) the other day. He said to me, “Google has gotten much worse since they added AI.” I agree. Since the tech giants control all our devices, however, we can’t stop it. Every time a system upgrade takes place, more and more AI is put into it. There is no opt-out clause. No wonder Meta believes it owns all world literature. Those who don’t believe in souls see nothing but gain in letting algorithms make all the decisions for them, as long as they have suckers (writers) willing to produce what they see as training material for their Large Language Models. And yet, Al can’t admit that he’s wrong. No, a musician and a religion professor are not the same person. People often share names. There are far more prominent “Steve Wigginses” than me. Am I a combination of all of us?

Technology is unavoidable, but the unanswered question is whether it is good. Governments can regulate, but with hopelessly corrupt governments, well, say hi to Al. He will give you wrong information and pretend that it’s correct. He’ll promise to make your life better, until he decides differently. And he’ll decide not on the basis of reason, because human beings haven’t figured that out yet (try taking a class in advanced logic and see if I’m wrong). Tech giants with more money than brains are making decisions that affect all of us. It’s like driving down a highway when heavy rain makes seeing anything clearly impossible. I’d never heard of this musician before. I like to think he might be Romani. And that he’s a fiddler. And we all know what happens when emperors start to see their cities burning.