Corporate logos are among the most instantly recognizable symbols in the world. Even in “developing” countries, kids know what the golden arches represent. Though no real fan of large corporations, I will still buy something I need without knowing who manufactured it. I find the frenetic need of non-profit organizations, even colleges and universities, to “brand” themselves vulgar and distasteful. Why do those who truly have something to offer feel they have to snuggle up to Wall Street and its resident demons? Still, the corporate logo has a way of drawing attention to products. And sometimes we look for more significance in logos than they actually have. Keep in mind that corporations’ goals are merely to separate you from your money. Often it doesn’t take much thought.

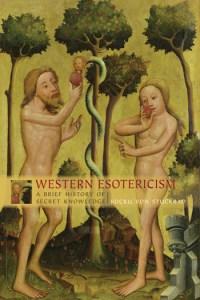

When I was a child I thought the golden arches were supposed to be french fries. And when I started to use computers (always Apple), I wondered if their logo might not be the most infamous bitten apple of all, the apple of Eden. Forbidden knowledge. It seemed to fit perfectly. Too bad it’s incorrect. Interviews with Steve Jobs, Steve Wozniak, and various marketing designers have revealed that the Bible had nothing to do with it. The original Apple logo showed Isaac Newton under an apple tree with the apocryphal fruit falling toward his head. It was felt that this detailed and complex logo didn’t have the instant recognition that a trademark requires, and so a marketing firm came up with the apple we all recognize. Initially it was a rainbow apple, but now the mere outline tells us what we need to know.

But what’s with the bite mark? Surely that must be a throwback to Eden? No, apparently not. We don’t know that Newton ate his apple, but a stylized apple looks a lot like a stylized cherry. The bite mark was added to the logo for scale. You don’t want to confuse the buyer. Corporate logos are markers that say, “place your money here.” Non-profit organizations used to exist to provide valuable services, services whose worth couldn’t be reckoned in dollars and cents. Now there is no other way to show value. We have followed the false idol of corporate thinking, and the only way we can imagine to draw attention to what we offer is to brand ourselves. So it has always been with cattle, where the branding was much more obvious. Yes, those who follow corporations should remember that the brand began with red-hot iron, and it left an indelible scar. Of course, I’m writing this on an Apple computer.